Transformer and diffusion models are not an incremental upgrade to the CNN/RNN/GAN era. They are a step function. Elad Gil, who built ML systems at Google and sold Mixer Labs to Twitter, argues that treating modern AI as a continuum with prior ML waves is the same category error as calling an airplane a car with wings. Before transformers, in Gil's account, nearly every ML startup failed, and value accrued to incumbents because the technology was too weak to open new markets.

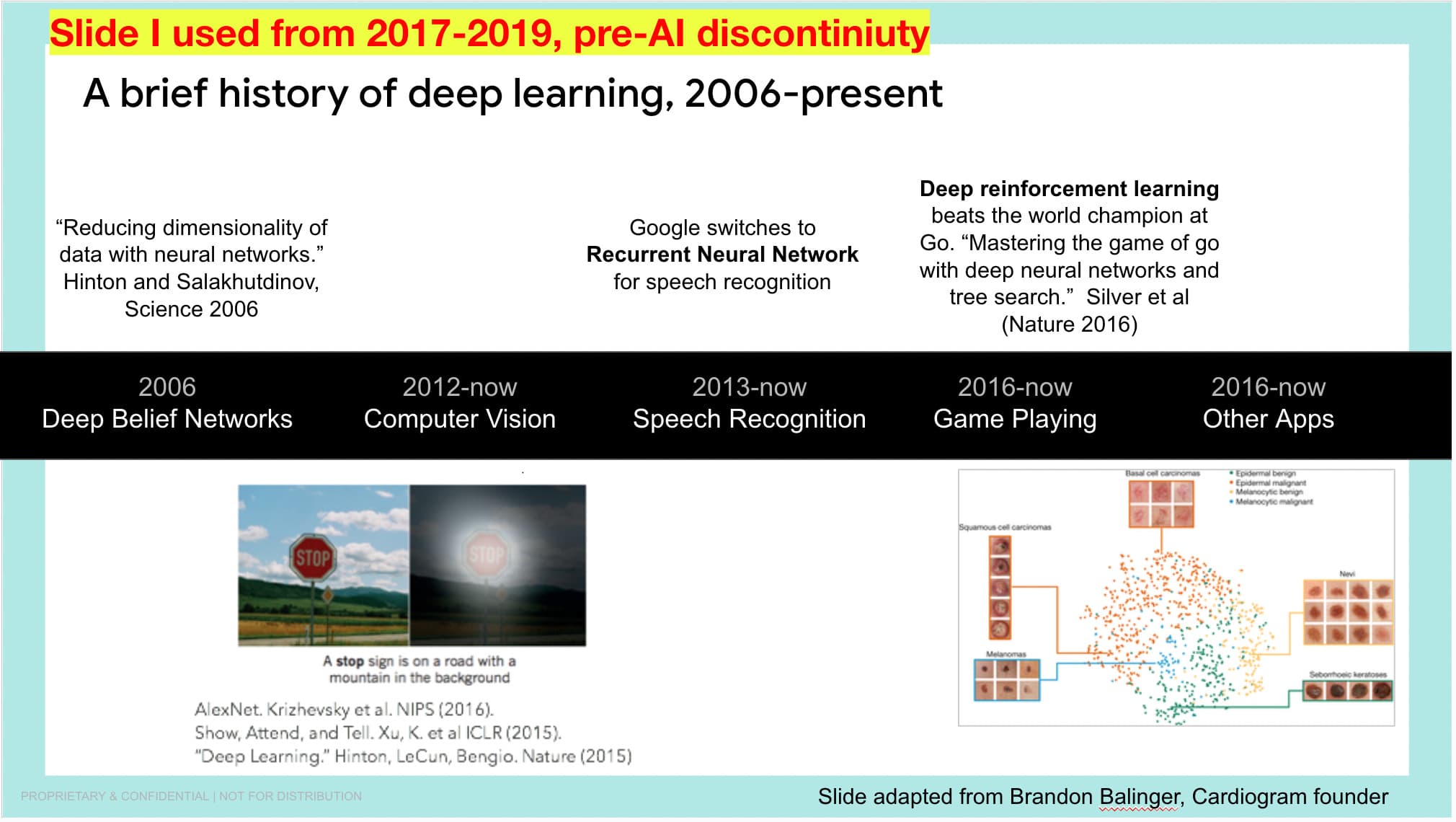

That framing matters for anyone allocating capital or building products. From 2017 to 2019, Gil used a slide that accurately reflected a world where CNN- and RNN-based startups could not compete with incumbents' data advantages. He now uses a different slide. The original piece shows both. The contrast is the argument, and it is worth seeing directly.

The piece does not predict winners. It draws a hard line between two technological eras and demands that readers stop conflating them. If your mental model of AI risk, opportunity, or competition was formed before 2020, Gil's framing is a useful forcing function for updating it.

[READ ORIGINAL →]