ChatGPT has been live for roughly 9 months. GPT-4 for 6. Calls to regulate AI are already loud, citing misinformation, bias, existential risk, and chemical attacks. None of these harms have materialized at any documented frequency, and the logical chain from AI to, say, a chemical attack remains unbuilt. The argument for urgent regulation is, right now, theoretical.

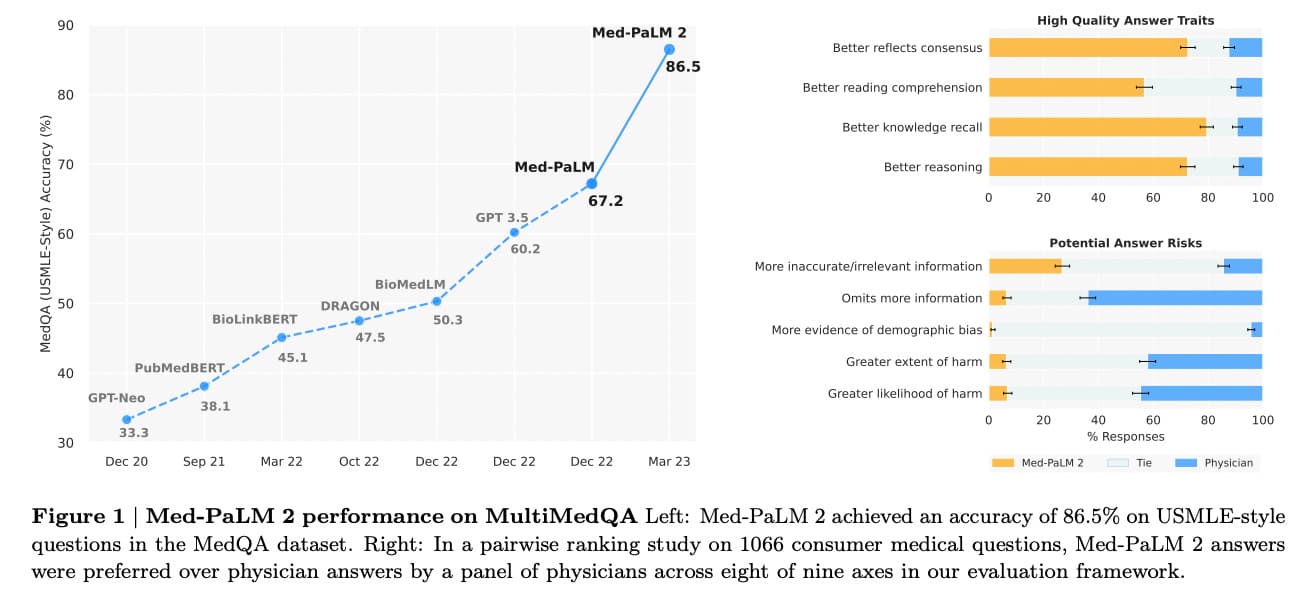

The more important story is what premature regulation would kill. Google's Med-PaLM 2 already outperforms medical experts on clinical benchmarks, with evidence that adding more physician input actually degrades the model. That is one data point in a much larger case the author makes for AI as a tool for global health and educational equity. Regulation at this stage risks locking in incumbents, pushing economic gains overseas, and making the technology government-centric instead of user-centric. The author notes, without softening it, that the loudest voices calling for regulation are the largest incumbents, who benefit directly from rules that freeze the competitive landscape.

The full piece is worth reading for its breakdown of which regulatory interventions are actually defensible, such as export controls on advanced chips to foreign adversaries, and which are incumbency protection dressed as safety. The author's framework for separating legitimate risk from regulatory capture is the reason to read the whole piece and not just its conclusion.
