General Intuition raised $133.7 million in a seed round to build World Models, systems that learn to predict the near future from action-labeled gaming clips. Co-written by Not Boring's Packy McCormick and General Intuition co-founder Pim De Witte, this piece is the most comprehensive public account of where World Models stand today. It covers their history, the underlying theory, the competing architectures, and the open questions no one has answered yet.

The field is moving fast, and the money is following. Fei-Fei Li's World Labs raised $1 billion. Yann LeCun's AMI raised $1.03 billion. World Models headlined NVIDIA GTC. The essay does not argue that LLMs will reach superintelligence. It argues that World Models will produce superhuman, complementary machines that operate in physical space and do things humans cannot or will not do.

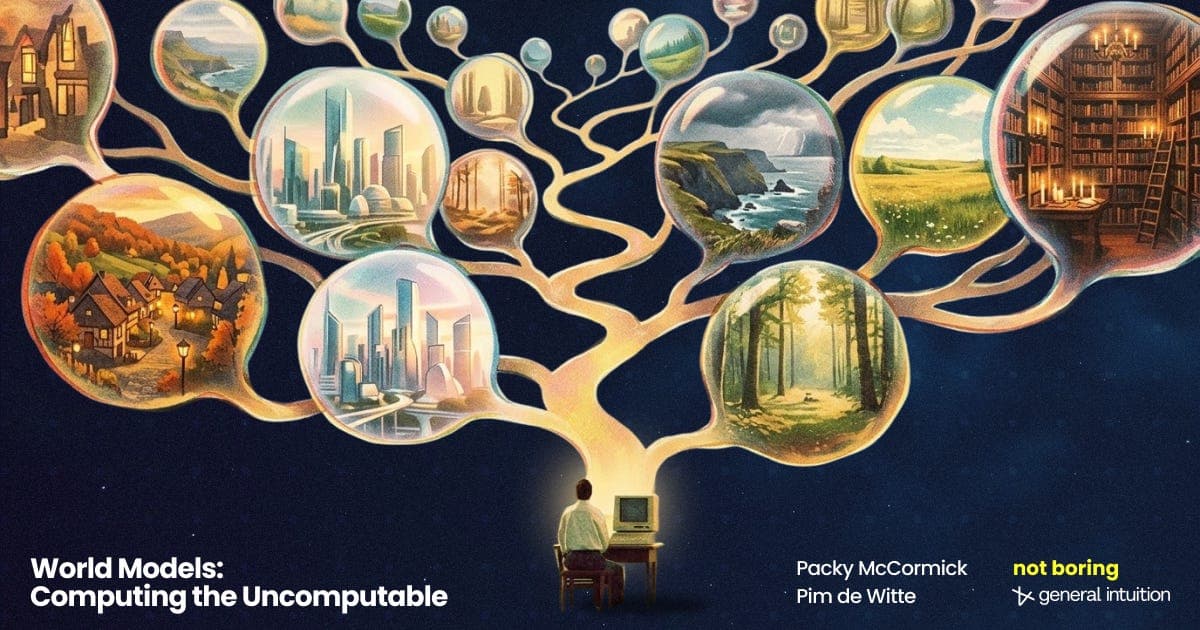

Read the original for the architecture debates. General Intuition has a specific approach, and the piece is worth your time precisely because De Witte presents the tradeoffs of every method, including his own, without declaring a winner. The future of embodied AI agents trained in simulated environments is not yet settled, and this essay maps every live branch of that decision tree.
