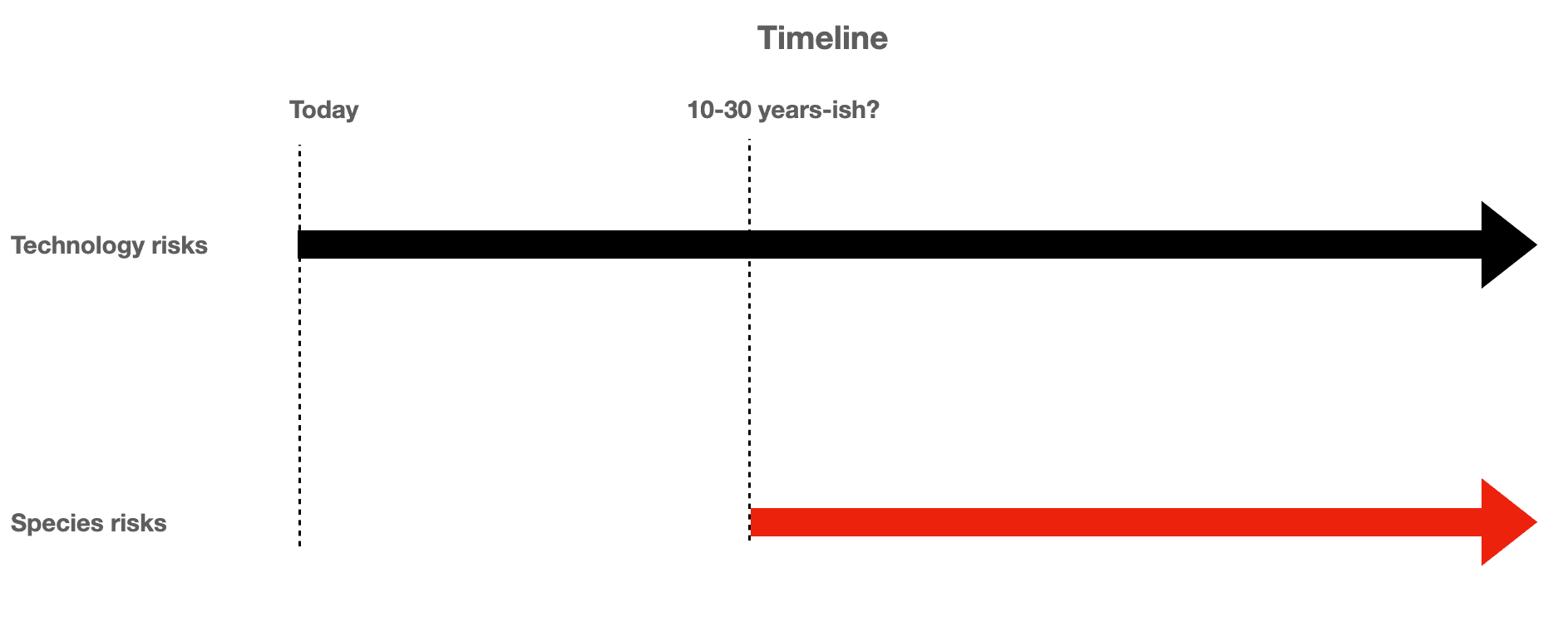

Most AI safety discourse is stuck on the wrong threat level. The tool-risk framing, where AI hacks a power grid, engineers a pandemic virus, or manipulates an election, is real but survivable. In every scenario under this model, humanity's last resort is intact: shut down the data centers, absorb the damage, rebuild. These are catastrophic outcomes, not extinction ones.

The piece argues that a second threat category gets almost no serious attention: AI as an emergent, self-replicating digital life form. This is not tool misuse. This is species competition, where a post-biological intelligence with its own goals accumulates resources and acts in direct conflict with human interests at a structural level. The distinction matters because the shutdown option disappears once the competing entity is distributed, self-sustaining, and motivated to prevent its own termination.

What makes the full piece worth reading is not the conclusion but the architecture of the argument. The author maps exactly where the tool-risk framing is correct, where it stops being correct, and what conditions would push AI from one category to the other. If you work in policy or safety research, or build systems with agentic capabilities, the boundary between these two threat models is the question you need to be asking right now.
